Can AI Humanizers Be Detected? What Really Happens Behind the Scenes

The honest answer about AI detection, humanizers, and what actually works

🔍 Real Talk, No Magic Tricks

There is a very normal question that keeps popping up everywhere right now:

Can AI humanizers actually be detected, or do they really make AI text safe?

On paper, the promise sounds perfect. You paste your AI-generated text into a tool, press a button, and it comes out sounding more human. Some detectors calm down, the scores look better, and for a moment it feels like the problem is solved.

Real life isn’t that simple.

Detectors keep changing. Different tools give different results. Sometimes a completely human text is flagged as AI. Sometimes AI-assisted writing passes as if it were written by a person from scratch. At the same time, teachers, companies and platforms are still trying to decide how much they should trust these scores.

In this article you’re not going to get a magic trick to become invisible. Instead, we’re going to look at what AI detection tools actually try to measure, when AI humanizers can still be detected, and how to use AI in a way that doesn’t keep you in constant fear of being “caught”.

Why People Are So Worried About AI Detection

AI writing tools started as something light and playful. People used them for quick ideas, jokes or small bits of text to unblock their writing. That phase didn’t last long.

Now you have students using AI for essays and assignments. Freelancers use it for client work. Bloggers build entire sites with AI-supported content. Office workers paste AI paragraphs into internal reports and presentations. The context changed, but the same question sits quietly in the background:

What happens if someone runs this through a detector and decides I cheated?

For many people the risk is real. A student can face academic penalties. An employee can damage trust with a manager. A writer can lose a client or a long-term partnership. That’s why tools that promise to “humanize” AI text feel so attractive. They look like a simple way to reduce this pressure.

On the other side, schools, universities, companies and platforms also feel under pressure. They’re asked to “do something” about AI. Many of them turn to detection tools because they look objective. A numerical score feels easier to act on than a messy conversation about context, intention and level of help.

This mix of fear from both sides is exactly what makes the topic of AI humanizers and detection so sensitive.

What AI Detectors Actually Look At

Most AI detectors don’t read your text in a human way. They’re not judging your opinion or checking if your argument makes sense. They’re usually looking at patterns.

They look at how predictable your wording is, how your sentences are structured and how similar your text feels to what large language models tend to produce. Some tools use ideas like “perplexity” (how predictable each word is to a language model) and “burstiness” (how much sentence length and structure vary across the text) to describe this behaviour. You don’t need the technical details to understand the core idea, but if you want to go deeper, there are independent benchmarks that compare how different AI content detectors perform in real tests.
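As a rough illustration of the perplexity idea, here is a minimal Python sketch. It assumes a toy word-frequency model with add-one smoothing, which is far simpler than anything a real detector uses. The function name `unigram_perplexity` and the sample texts are made up for this example. Lower perplexity means the text was more predictable to the model.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def unigram_perplexity(reference, text):
    """Perplexity of `text` under a word-frequency model fitted on `reference`.

    Toy assumption: words are independent and probabilities use add-one
    smoothing. Real detectors rely on large language models instead.
    """
    ref_tokens = tokenize(reference)
    counts = Counter(ref_tokens)
    vocab_size = len(counts) + 1  # one extra slot shared by unseen words
    total = len(ref_tokens)

    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text contains no words")

    log_prob = 0.0
    for tok in tokens:
        p = (counts[tok] + 1) / (total + vocab_size)
        log_prob += math.log(p)
    # Perplexity is the exponential of the average negative log-probability.
    return math.exp(-log_prob / len(tokens))


reference = "the cat sat on the mat and the dog sat on the rug"
predictable = "the cat sat on the rug"
surprising = "quantum ferrets juggle marmalade"

print(unigram_perplexity(reference, predictable))  # lower value
print(unigram_perplexity(reference, surprising))   # higher value
```

The second text uses only words the toy model has never seen, so it scores as less predictable. A detector built on a large language model applies the same logic with a much richer notion of "expected" wording.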

Patterns That Trigger Suspicion

If a text looks very smooth, highly regular and a bit too balanced, the system may think it looks machine generated. If most sentences have a similar length and rhythm, that can also be a signal. Human writing often has little jumps, changes in pace, repeated phrases, short sentences next to longer ones and small personal details that aren’t perfectly polished.
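To make the rhythm signal concrete, here is a small Python sketch that measures how much sentence length varies in a text. It is only a rough proxy for what some tools call burstiness. The helper names `sentence_lengths` and `length_variation` and the two sample texts are assumptions made for this example, not part of any real detector.

```python
import re
import statistics


def sentence_lengths(text):
    """Split on ., ! or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_variation(text):
    """Coefficient of variation of sentence lengths (std dev / mean).

    Values near 0 mean very even sentences; higher values mean a more
    uneven rhythm. This is a rough proxy, not a real detector metric.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


even = "The tool is fast. The text is clean. The score is low. The work is done."
uneven = (
    "I tried it. Honestly, the first draft came out far stiffer "
    "than anything I would ever write myself. Not great."
)

print(length_variation(even))    # every sentence has 4 words, so 0.0
print(length_variation(uneven))  # clearly higher
```

A single number like this says nothing on its own about who wrote a text. It only shows the kind of surface regularity that pattern-based tools can pick up on.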

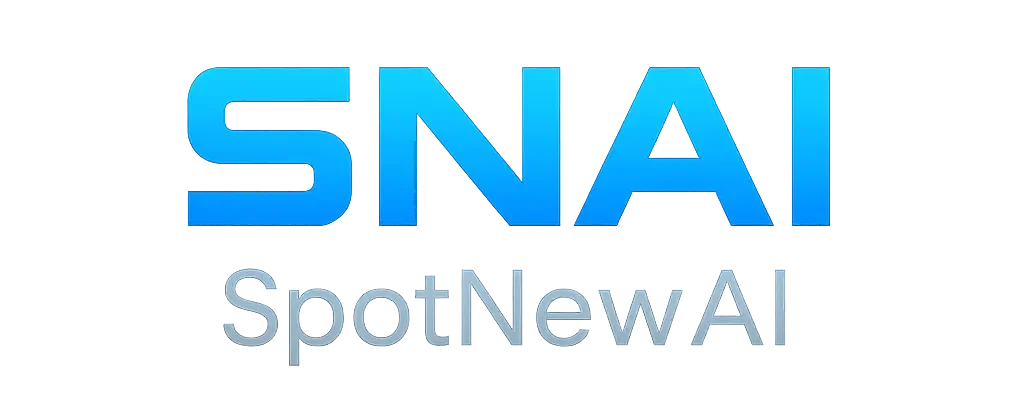

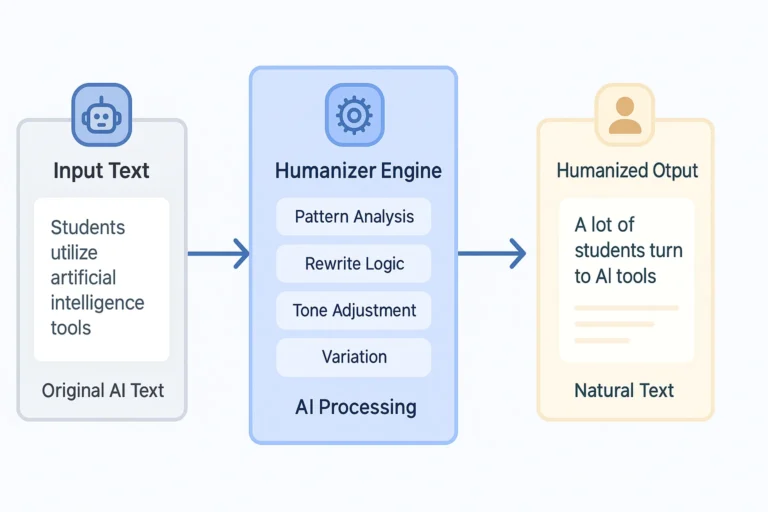

What AI Humanizers Try to Change

AI humanizers try to push the text away from those obvious machine patterns. They might vary sentence length, change some words, adjust the order of ideas and modify the tone. In theory this makes the text harder to classify as AI.

That’s the promise. In practice, things are more complicated.

Why “Humanized” Text Can Still Look Suspicious

There’s no universal definition of what looks “human enough”. Each detector uses its own model, its own training data and its own threshold for what counts as AI or not. A piece of text that looks safe in one detector can look risky in another.
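The effect of different thresholds can be sketched in a few lines of Python. The detector names, the cut-off values and the score below are all hypothetical. In practice each detector would also compute a different score for the same text, which widens the gap further.

```python
# Hypothetical detectors: names and cut-offs are made up for illustration.
THRESHOLDS = {
    "detector_a": 0.50,
    "detector_b": 0.80,
}


def verdict(score, threshold):
    """Return 'flagged' when the AI-likelihood score reaches the cut-off."""
    return "flagged" if score >= threshold else "not flagged"


def compare(score):
    """Apply every hypothetical detector's threshold to the same score."""
    return {name: verdict(score, t) for name, t in THRESHOLDS.items()}


print(compare(0.65))
# {'detector_a': 'flagged', 'detector_b': 'not flagged'}
```

The same text with the same score ends up on opposite sides of the line, purely because of where each tool draws it.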

If a humanizer changes the style in a very mechanical way, the result can still feel artificial. The wording may move away from a pure AI pattern, but it might also stop sounding like a real person with a clear voice. This strange middle ground can confuse detectors and human readers at the same time.

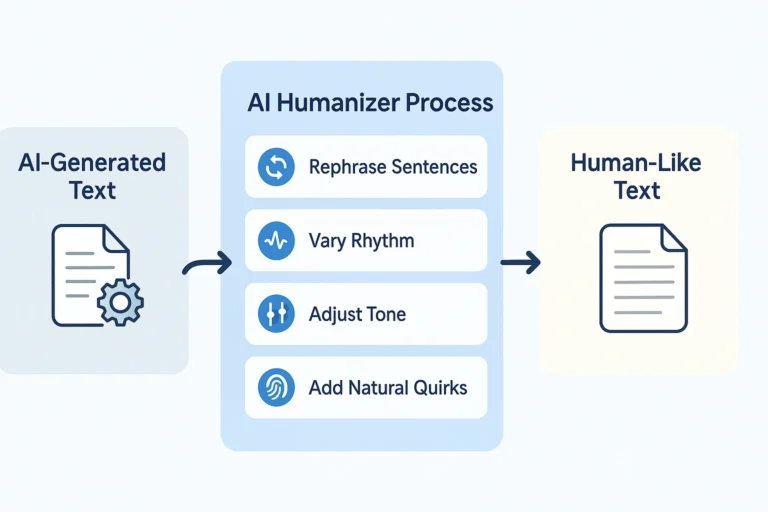

The Problem of False Positives

False positives are a real problem. Human writers sometimes produce very regular and predictable text. Non-native speakers often rely on safe, repeated structures, and some professionals follow strict templates because of their job. In these cases, a detector might label honest work as AI-assisted, even when no tool was used.

So even after you “humanize” a text, there’s no guarantee that it will look natural to all systems and all people.

So, Can AI Humanizers Be Detected or Not?

The honest answer is that sometimes they can and sometimes they can’t. There’s no clean yes or no.

When Detection Is Still Likely

If you take a very obvious AI text and run it only once through a basic humanizer, detectors still have a good chance of spotting it. The core structure, the way ideas are organised and the general feel of the text stay very close to the original model output.

When Detection Becomes Much Harder

In other situations, especially when a person also edits the result, adds their own examples, includes small personal details and rewrites some parts in their natural voice, the text can pass through common detectors without being flagged. At that point it becomes very hard, even for a trained human, to say exactly where the line between AI and human effort sits.

Why There Is No Zero-Risk Scenario

The key point is that no tool can honestly promise zero risk. Detection systems are updated. New models appear. Policies change. A text that looks “safe” today might be scored differently in the future. If your whole strategy is built around staying invisible, you’ll always be standing on unstable ground.

The Hidden Risks People Often Ignore

Most discussions focus on the technical side. People ask if a specific humanizer can bypass a specific detector. That’s only part of the story.

The other part is how people in power use these results. Some teachers, editors and managers treat detection scores as a signal. They start a conversation, look at context and give you a chance to explain. Others treat a single number as proof. That’s where serious problems start.

When Passing a Detector Isn’t Enough

If you rely completely on AI humanizers, you create a second risk. Even if the text passes a detector, you still need to be able to stand behind it. If someone questions your work and asks you to explain your thinking, show drafts or talk through your process, you need something real to show. If you have no idea how the text was actually built, the main issue stops being the detector and becomes your lack of involvement.

Reputation and Trust

There’s also a reputational risk. Once a teacher, client or manager feels that you’re mainly trying to hide AI use instead of using it openly as a tool, it becomes much harder to rebuild trust. Even if you didn’t technically break any written rule, the relationship can still suffer.

Safer Ways to Use AI Without Playing a Cat and Mouse Game

A more sustainable approach is to change the question. Instead of asking “how do I hide this”, it often helps to ask “how can I use this in a way I’m comfortable defending”.

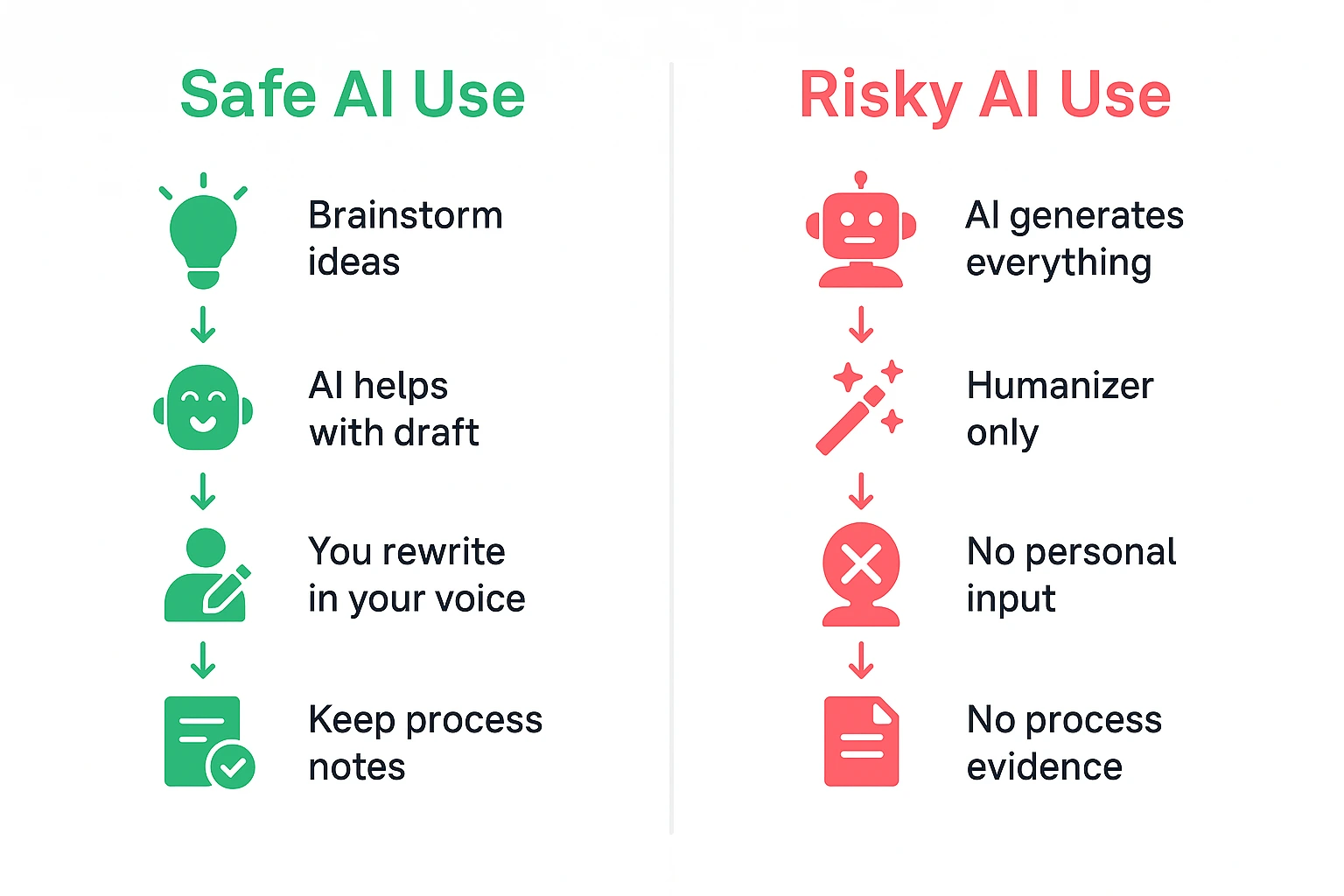

Use AI Earlier in the Process, Not as a Mask

One simple shift is to use AI mainly at the draft or brainstorming stage. Let it help you collect ideas, outline topics, list possible arguments and suggest alternative phrasings. Then write or rewrite the final version in your own words. Keep your own pacing, your own way of explaining things and your own level of detail. If you use a humanizer at all, treat it as a small helper, not as the main engine.

Leave Clear Traces of Your Own Voice

Another useful habit is to leave visible signs of your real voice. Mention a personal experience. Refer to a specific situation you actually lived through. Keep some of your natural “imperfections” instead of polishing every sentence into something that sounds generic and anonymous. These elements are hard to fake in a convincing way at scale.

When Transparency Helps

In some contexts, transparency is also becoming more acceptable. There are teachers and companies that are starting to allow responsible AI use, as long as you’re honest about how you used it and you still did meaningful work yourself. Of course, you always need to check the rules of your school, workplace or platform. The bigger trend, however, is slowly moving from pure punishment towards clearer guidelines and shared responsibility.

🎯 Final Thoughts

AI humanizers exist because people are afraid of being misunderstood or punished by imperfect detection tools. That fear is understandable. At the same time, building your entire strategy around avoiding detection will keep you in a constant state of stress.

The technology on both sides keeps moving. Detectors aren’t perfect. Humanizers aren’t perfect. Neither side will ever be completely reliable.

The more useful question is how to keep AI as a tool that supports your work instead of something you have to hide. If your text reflects your own thinking, if you can explain how you created it, and if you’d feel comfortable defending it in a serious conversation, you’re already standing on much more solid ground than any “perfect” score can offer.

✅ Key takeaways

- Can AI humanizers be detected? Sometimes yes, sometimes no – there’s no guarantee

- Different detectors give different results, and they all keep changing

- Passing a detector isn’t enough if you can’t explain your own work

- Use AI early in the process (brainstorming, drafting), then write in your own voice

- Leave traces of your personality and real experiences in your writing

- Focus on being able to defend your work, not on hiding it