Best AI Detector Tools for Teachers: Accuracy & Limitations

Research Methodology: This analysis synthesizes findings from published studies, including a July 2023 arXiv paper on AI detection accuracy and Stanford HAI’s research on bias against non-native English speakers, along with educator reports from education forums and documented classroom experiences at institutions like Vanderbilt and the University of Pittsburgh. All information was cross-referenced across sources.

Best AI Detector Tools for Teachers: What Actually Works in Real Classrooms

Look, let’s be real here. AI detector tools for teachers went from “nice to have” to absolutely critical basically overnight when ChatGPT showed up in classrooms.

One semester you’re grading normal student work. Next semester? Half the essays look… weird. Perfect grammar but no soul. Sentences that flow beautifully but say literally nothing. That kid who usually writes like they’re still texting? Suddenly cranking out stuff that sounds like a corporate training manual.

Yeah. We all know what’s going on.

So teachers started looking for detection tools. Simple idea: upload the essay, get a percentage, move on. Should be straightforward for people already buried in grading.

Here’s the problem, though. Most of these checkers? They don’t work reliably. University research shows they regularly flag real human writing as AI. They miss super obvious ChatGPT stuff. And the same essay uploaded twice can get totally different scores on different days.

But a few tools seem to perform decently according to what researchers found. Here’s the breakdown on which AI detector tools for teachers might actually be worth your time, and which ones are gonna cause more headaches than they solve.

Why Teachers Need Reliable Detection (And Why Most Tools Are Kinda Broken)

The numbers are pretty wild. Education forums and surveys show that 64% of teachers are dealing with students who use AI generators. That’s not a small slice; that’s most teachers.

And yeah, academic integrity matters. When kids just ask ChatGPT to write their papers, they’re missing out on building actual thinking skills. Research backs this up: original writing develops analysis abilities that you can’t get from prompting an AI.

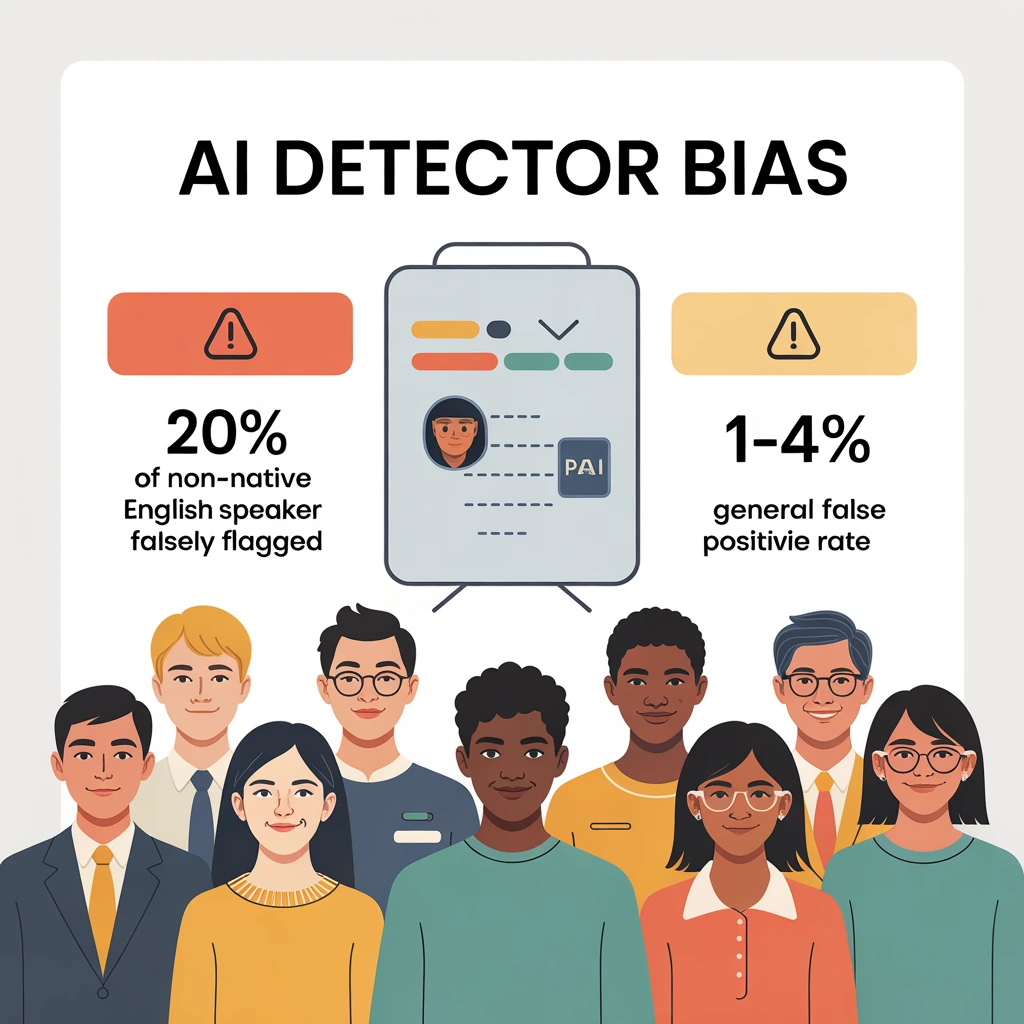

But here’s where detection gets messy. Even a 1% false positive rate? That’s way worse than it sounds. Vanderbilt University did the math: with about 75,000 papers submitted annually, 1% works out to roughly 750 papers from innocent students getting flagged as AI-written when they weren’t.

Think about that for a second. 750 kids who did their own work, getting flagged.
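Here’s Vanderbilt’s arithmetic as a tiny Python sketch. It’s a simplification, not their actual method: it assumes every submitted paper is human-written, so every flag counts as a false positive. The function name `expected_false_flags` is made up for illustration.

```python
def expected_false_flags(papers_per_year: int, false_positive_rate: float) -> float:
    """Expected number of human-written papers wrongly flagged as AI per year.

    Simplification: treats every paper as human-written, so every flag
    counts as a false positive. Real submission pools mix human and AI work.
    """
    if not 0.0 <= false_positive_rate <= 1.0:
        raise ValueError("false_positive_rate must be between 0 and 1")
    return papers_per_year * false_positive_rate


# Vanderbilt's numbers from the text: about 75,000 papers a year, 1% false positives.
flags = expected_false_flags(75_000, 0.01)
print(round(flags))  # 750
```

Notice the result scales with volume: a bigger school at the same rate means more wrongly flagged papers, even if the tool is no worse.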

University of Pittsburgh went even further. Their Teaching Center basically said current detection tech isn’t reliable enough to use without major risks. They switched off Turnitin’s AI detection feature completely. Just… turned it off.

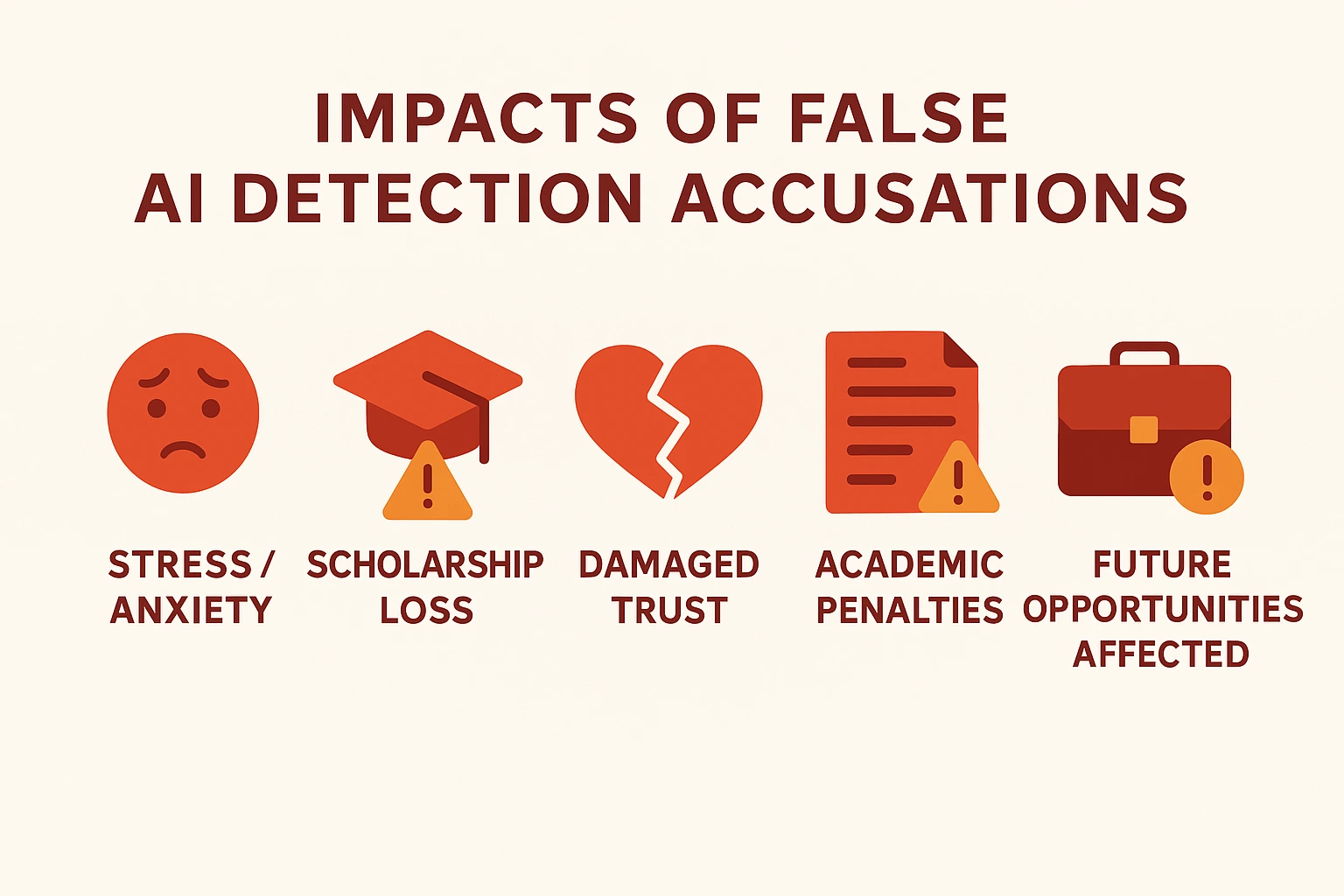

False accusations can wreck student trust, sometimes permanently. Educational psychology research links them to stress, anxiety, and confidence issues. Some students face actual penalties or lose scholarships over work they genuinely wrote themselves.

The Hidden Bias Problem: Non-Native English Speakers Getting Screwed

This part honestly made me angry when I read the Stanford research. Multiple AI detector tools for teachers show serious bias against students who aren’t native English speakers.

The data’s pretty damning. Research from Stanford’s Institute for Human-Centered AI (HAI) found that about 1 in 5 essays by non-native speakers, all 100% human-written, were flagged as AI by every detector tested. So we’re not talking about one detector messing up: the tools were unanimous. And almost all of the essays got flagged by at least one detector.

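Why’s this happening? Many detectors score how predictable a text’s word choices are (researchers call this perplexity), and predictable text reads as “AI-like.” ESL students use simpler vocabulary and sentence structures because that’s literally how language learning works, and the common phrases taught in English classes are exactly the predictable kind. Grammar checkers like Grammarly (which tons of students use) can also make writing seem more “AI-like” to these detectors.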
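To make “predictable” concrete, here’s a toy perplexity calculation in Python. Big assumption: real detectors get word probabilities from a large language model, while this sketch uses a tiny hand-made table (`TOY_PROBS`, every number invented) and a made-up cutoff. It only shows the direction of the effect: more common words produce lower perplexity, which is what a perplexity-based detector treats as “AI-like.”

```python
import math

# Invented word probabilities standing in for a real language model.
# Common textbook words get high probability; rarer word choices get low probability.
TOY_PROBS = {
    "education": 0.005,
    "is": 0.04,
    "very": 0.02,
    "important": 0.01,
    "indispensable": 0.0003,
}
UNKNOWN_PROB = 0.0001  # fallback for words missing from the toy table


def perplexity(text: str) -> float:
    """exp of the negative average log-probability per word (lower = more predictable)."""
    words = text.lower().split()
    if not words:
        raise ValueError("text must contain at least one word")
    avg_log_prob = sum(math.log(TOY_PROBS.get(w, UNKNOWN_PROB)) for w in words) / len(words)
    return math.exp(-avg_log_prob)


def looks_ai_like(text: str, cutoff: float = 100.0) -> bool:
    """Hypothetical rule: flag text whose perplexity falls below the cutoff."""
    return perplexity(text) < cutoff


simple = "Education is very important"  # the kind of phrasing taught in English classes
varied = "Education is indispensable"   # a rarer word choice
for sentence in (simple, varied):
    print(f"{sentence!r}: perplexity {perplexity(sentence):.0f}, flagged={looks_ai_like(sentence)}")
```

With these invented numbers, the plain sentence scores about 71 and gets flagged, while the one with the rarer word scores about 255 and doesn’t. A human could easily write either one, which is the whole problem.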

It gets worse. Common Sense Media reported that Black students get accused of AI plagiarism by teachers at higher rates than other students. We’re talking about equity problems on top of accuracy problems.

GPTZero: The One Everyone’s Using

GPTZero’s got over 8 million users at this point, with 300,000+ being actual teachers. It ranked #1 on G2 for trust (beating even Grammarly, which is saying something).

What makes GPTZero decent:

- They claim 99% accuracy, and Tom’s Guide backed that up when they compared it to competitors

- Shows you exactly where the AI is in the text with color coding, not just a percentage

- Explains WHY it flagged something instead of being a black box

- Can spot mixed essays (part human, part AI) with like 96.5% accuracy supposedly

- Free version lets you scan stuff under 10,000 characters without even making an account

- Works with Google Classroom if you upgrade

The annoying parts:

- Character limits on free version mean you can’t check longer papers

- Need institutional accounts for the good features

- Teachers in forums still report false positives happening sometimes

Pricing’s all over the place depending on school size. Gotta contact them directly for bulk deals.
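If you’re stuck on the free tier’s character limit, one workaround is to split a long paper into pieces and check each one separately. Here’s a minimal Python sketch that does just the splitting, assuming the 10,000-character cap mentioned above (check GPTZero’s current limits yourself). `split_for_limit` is a hypothetical helper, not part of any GPTZero tool, and it doesn’t connect to GPTZero at all; you’d still paste each chunk in by hand.

```python
def split_for_limit(text: str, limit: int = 10_000) -> list[str]:
    """Split text into chunks of at most `limit` characters.

    Breaks at blank-line paragraph boundaries when it can; a single paragraph
    longer than `limit` gets cut at exactly `limit` characters.
    """
    if limit <= 0:
        raise ValueError("limit must be positive")
    chunks: list[str] = []
    current = ""
    for para in text.split("\n\n"):
        # Hard-split any paragraph that is too long to fit on its own.
        while len(para) > limit:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(para[:limit])
            para = para[limit:]
        # Add the paragraph to the current chunk, or start a new chunk if it won't fit.
        candidate = para if not current else current + "\n\n" + para
        if len(candidate) <= limit:
            current = candidate
        else:
            chunks.append(current)
            current = para
    if current:
        chunks.append(current)
    return chunks


if __name__ == "__main__":
    sample = ("First paragraph. " * 400) + "\n\n" + ("Second paragraph. " * 400)
    for i, chunk in enumerate(split_for_limit(sample), start=1):
        print(f"chunk {i}: {len(chunk)} characters")
```

One caveat: a detector scoring a chunk sees less context than it would with the whole paper, so chunk scores won’t necessarily match what a full-length scan would say.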

Winston AI: That 99.98% Accuracy Claim Though

Winston markets itself hard with that 99.98% accuracy number. It’s designed for teachers, writers, and publishers, the whole content integrity crowd.

What’s supposedly good about it:

- Gold Penguin found it best at catching true positives (91.92% in their tests)

- Has plagiarism checking built in too

- Dashboard lets you organize student work pretty easily

- They update it regularly to catch new AI models

- Works in tons of languages: Spanish, French, Arabic, Chinese, Dutch, etc.

Reality check:

- That 99.98% probably doesn’t hold up in real classrooms based on what teachers say

- Costs around $12/month per person

- Needs at least 50 words to work right

It’s got a good balance of accuracy and ease of use, according to most teacher reviews. Just… take that 99.98% with a grain of salt.

Copyleaks: Top-Ranked in an arXiv Study

Here’s one that’s actually got academic backing. A July 2023 study posted on arXiv (the preprint server run by Cornell University) ranked Copyleaks the most accurate of the tools it tested at detecting AI-generated text.

Why it stands out:

- That study found it works well across different AI models

- Claims 99.12% accuracy and actually has documentation backing it up

- Gold Penguin ranked it third for true positives, second for avoiding false flags

- Works in 15+ languages

- Does plagiarism + AI checking in one tool

- Connects to learning management systems

- Has a free plan for individual teachers

Setup stuff:

- Pretty simple to get started, takes seconds

- Clear privacy policies for school data

- Regular updates to detection

If you want something with independent research behind it, this is probably your best bet.

Turnitin: Oh Boy, This One’s Controversial

Turnitin’s the big name in plagiarism detection. Everyone knows it. But their AI checker? Man, that sparked some serious drama.

What actually happened at universities:

Vanderbilt looked at the numbers and went “nope.” They calculated that a 1% false positive rate meant roughly 750 papers from innocent students getting flagged every year. The tool also rolled out with less than 24 hours’ notice and, initially, no way to turn it off. They eventually just disabled it.

University of Pittsburgh’s Teaching Center put out official guidance saying detection isn’t reliable enough. They shut it off completely and currently don’t recommend ANY AI detectors.

The Washington Post and Rolling Stone documented multiple cases where students got falsely accused. Real students, real damage to their academic records.

What Turnitin says about their tool:

- 98% accurate supposedly

- Less than 1% false positives for papers with over 20% AI detected

- Some independent studies showed “very high accuracy”

- They’ll accept 15% false negatives to avoid false positives
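That last point is a threshold tradeoff: set the “flag this” cutoff higher and you wrongly flag fewer humans, but more AI text slips through. Here’s a small Python sketch with made-up scores to show the tradeoff. It’s not Turnitin data and not how Turnitin computes anything; `error_rates`, `HUMAN`, and `AI` are all invented for illustration.

```python
def error_rates(human_scores: list[float], ai_scores: list[float], threshold: float) -> tuple[float, float]:
    """Return (false_positive_rate, false_negative_rate) for a 'flag if score >= threshold' rule."""
    if not human_scores or not ai_scores:
        raise ValueError("need at least one score in each group")
    false_positives = sum(score >= threshold for score in human_scores)  # humans wrongly flagged
    false_negatives = sum(score < threshold for score in ai_scores)      # AI text that slips through
    return false_positives / len(human_scores), false_negatives / len(ai_scores)


# Invented detector scores (0-100 "% AI") for ten human essays and ten AI essays.
HUMAN = [5, 12, 18, 25, 33, 41, 55, 62, 70, 78]
AI = [45, 58, 66, 72, 80, 85, 88, 91, 95, 99]

for threshold in (50, 80):
    fp, fn = error_rates(HUMAN, AI, threshold)
    print(f"threshold {threshold}: {fp:.0%} of humans flagged, {fn:.0%} of AI text missed")
```

In this toy data, moving the cutoff from 50 to 80 drops wrongly flagged humans from 40% to 0%, while missed AI text climbs from 10% to 40%. That’s the same kind of trade Turnitin describes making.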

The problems people point out:

- Won’t explain exactly how it works

- Need to talk to sales reps, can’t just buy it yourself

- No individual licenses

- Only works with other Turnitin stuff

- Won’t tell you the price publicly

Look, lots of schools already have Turnitin for plagiarism checking, so adding AI detection seems convenient. But given that multiple universities literally turned it off, plus those false accusation cases… be really careful with this one.

Pangram Labs: They Claim Lowest False Positives

Pangram came out of research teams from Google, Tesla, and Stanford. Their whole thing is cutting false positives through specific training methods.

Their approach:

- Say they’ve got a 1-in-10,000 false positive rate, way lower than others

- Academic studies showed Pangram “significantly outperforms” other detectors

- Uses something called mirror prompting and active learning (it’s in their technical reports)

- Works best on texts over a few hundred words

- Claims over 99% accuracy with almost no false flags

- Handles multiple languages

What teachers said:

One teacher said “students are so convinced of its accuracy that it seems to be an even greater deterrent.” Another mentioned “minimal false positives and negatives” after using it extensively.

Practical stuff:

- Simple interface

- Upload files or paste text

- Need at least 50 words

- GDPR compliant and all that

If not falsely accusing students is your number one concern (and honestly it should be), this one’s worth looking at.

Originality.AI: Meh, There Are Better Options

Originally made for web publishers and content creators. Some teachers use it, but it’s not really designed for classrooms.

What it’s got:

- Claims 98.2% accuracy

- Independent tests put it closer to 90% realistically

- Has plagiarism + AI detection

- Bulk scanning for multiple docs

- API if you want to integrate it

Why it’s not ideal for teachers:

- Wasn’t built for education

- Lower accuracy than education-focused tools

- Pricing aimed at content creators, not teachers

It works, but you’ve got better options if you’re in education.

Scribbr: Free and Unlimited, But Only Average Accuracy

Scribbr’s known for grammar and editing help, similar to Grammarly. They moved into AI detection like everyone else.

Here’s the interesting part: Scribbr actually admits upfront their free AI checker has “average accuracy.” Most companies wouldn’t say that publicly.

What makes Scribbr different:

Scribbr lets you run free AI checks in the browser without creating an account. Each check has a practical length limit, so long papers may need to be split. If you need more, there’s a paid option, plus plagiarism checks you can buy per document. The name is already familiar on campus because of the citation and editing tools, which makes it easy to introduce in class.

The reality for teachers:

Use it as a quick first look, not a verdict. Scores are signals, not proof, and you will see misses in both directions. For anything high-stakes, check the same text with another tool and ask for process evidence like drafts or revision history. If you need a full plagiarism readout, plan for the paid report instead of relying on the free pass. That way you keep costs low and reduce the chance of a bad call.

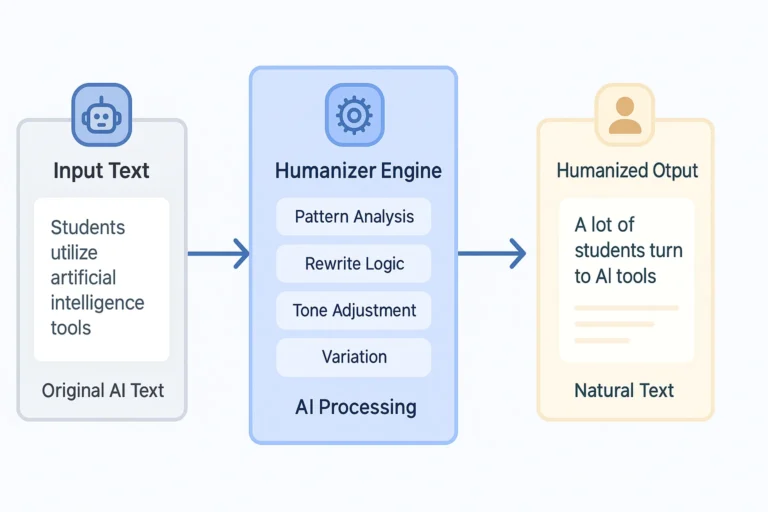

What Researchers Say: Perfect Detection Might Be Impossible

Here’s the uncomfortable truth from the academic side. University of Maryland researcher Soheil Feizi says that if we’re using detectors on students, the false positive rate should be 0.01%. That’s 1 wrong accusation per 10,000 papers.

When’s that gonna happen? “At this point, it’s impossible,” he told The Washington Post straight up.
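To see how far apart those rates are, here’s a quick Python comparison at Vanderbilt’s volume of about 75,000 papers a year. Reusing Vanderbilt’s volume for Feizi’s target is just an illustration, and like Vanderbilt’s math it treats every paper as human-written. The helper `false_flags` is hypothetical. For reference, Pangram’s self-reported 1-in-10,000 rate sits right at Feizi’s bar, though that’s the company’s own claim.

```python
def false_flags(papers: int, one_in: int) -> float:
    """Expected wrongly flagged papers when the false positive rate is 1 in `one_in`."""
    if one_in <= 0:
        raise ValueError("one_in must be positive")
    return papers / one_in


PAPERS_PER_YEAR = 75_000  # Vanderbilt's annual figure from the article

RATES = {
    "1% false positives": 100,                       # 1 in 100
    "0.01% false positives (Feizi's bar)": 10_000,   # 1 in 10,000
}

for label, one_in in RATES.items():
    print(f"{label}: about {false_flags(PAPERS_PER_YEAR, one_in):g} papers wrongly flagged per year")
```

Same school, same volume: roughly 750 wrongly flagged papers at 1% versus 7 or 8 at 0.01%. That 100x gap is the distance between the rate in Vanderbilt’s math and what Feizi says would be acceptable.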

The core issue according to research: AI-generated text and human-generated text are getting more similar over time. As language models improve, telling them apart gets harder, not easier.

“We should just get used to the fact that we won’t be able to reliably tell if a document is either written by AI, partially written by AI, edited by AI, or by humans,” Feizi explained.

His take? “We should adapt our education system to not police the use of AI models, but basically embrace it to help students to use it and learn from it.”

That’s… actually pretty reasonable when you think about it.

How Teachers Can Use Detectors Without Screwing It Up

If you’re gonna use AI detector tools for teachers despite all the problems, here’s how to minimize the damage:

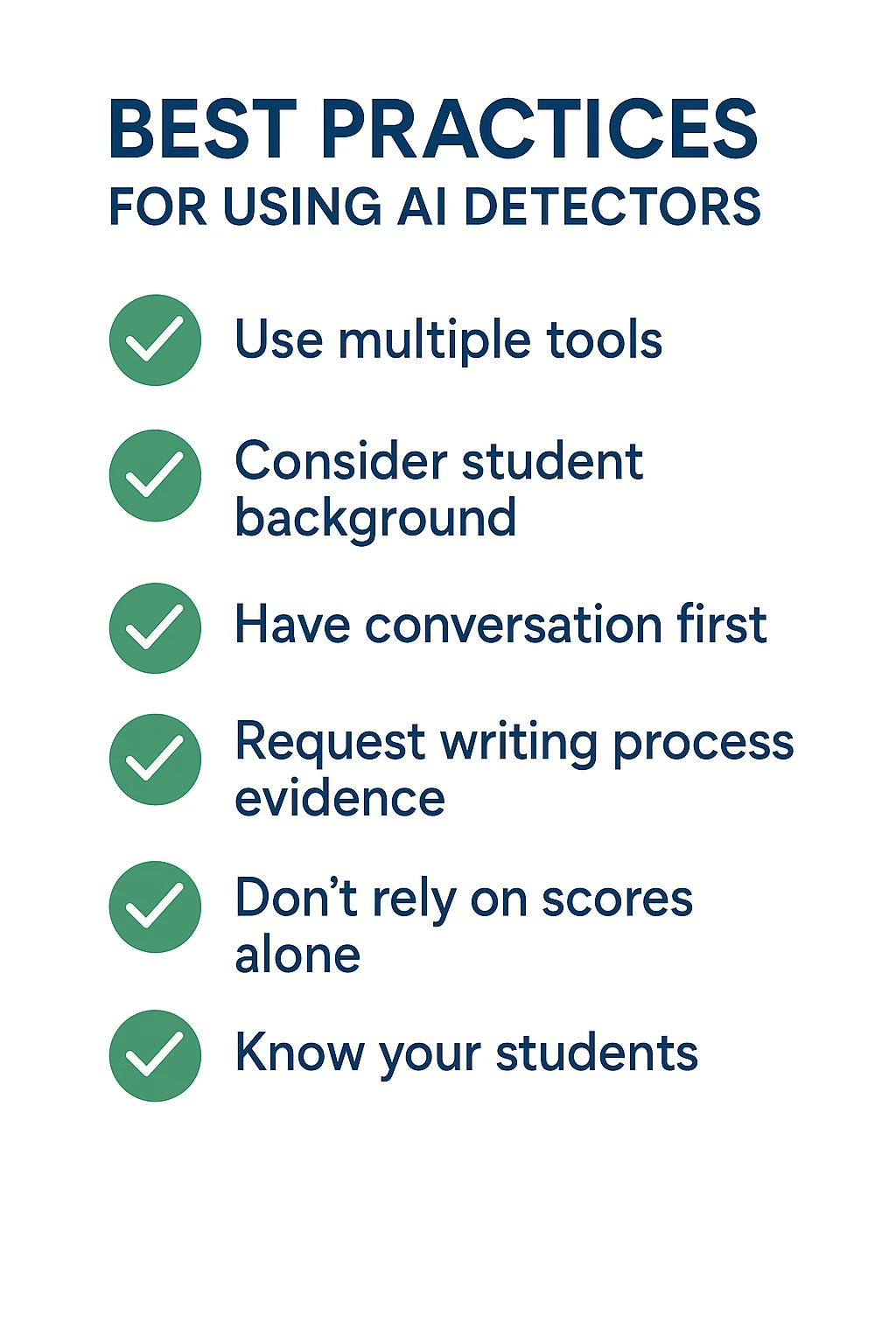

Don’t Trust the Scores by Themselves

Even Turnitin’s Chief Product Officer said detection is “only one small piece of the puzzle.” The biggest piece? Your actual relationship with students.

Know your students. Recognize their writing style. Build the kind of classroom where kids don’t wanna cheat in the first place.

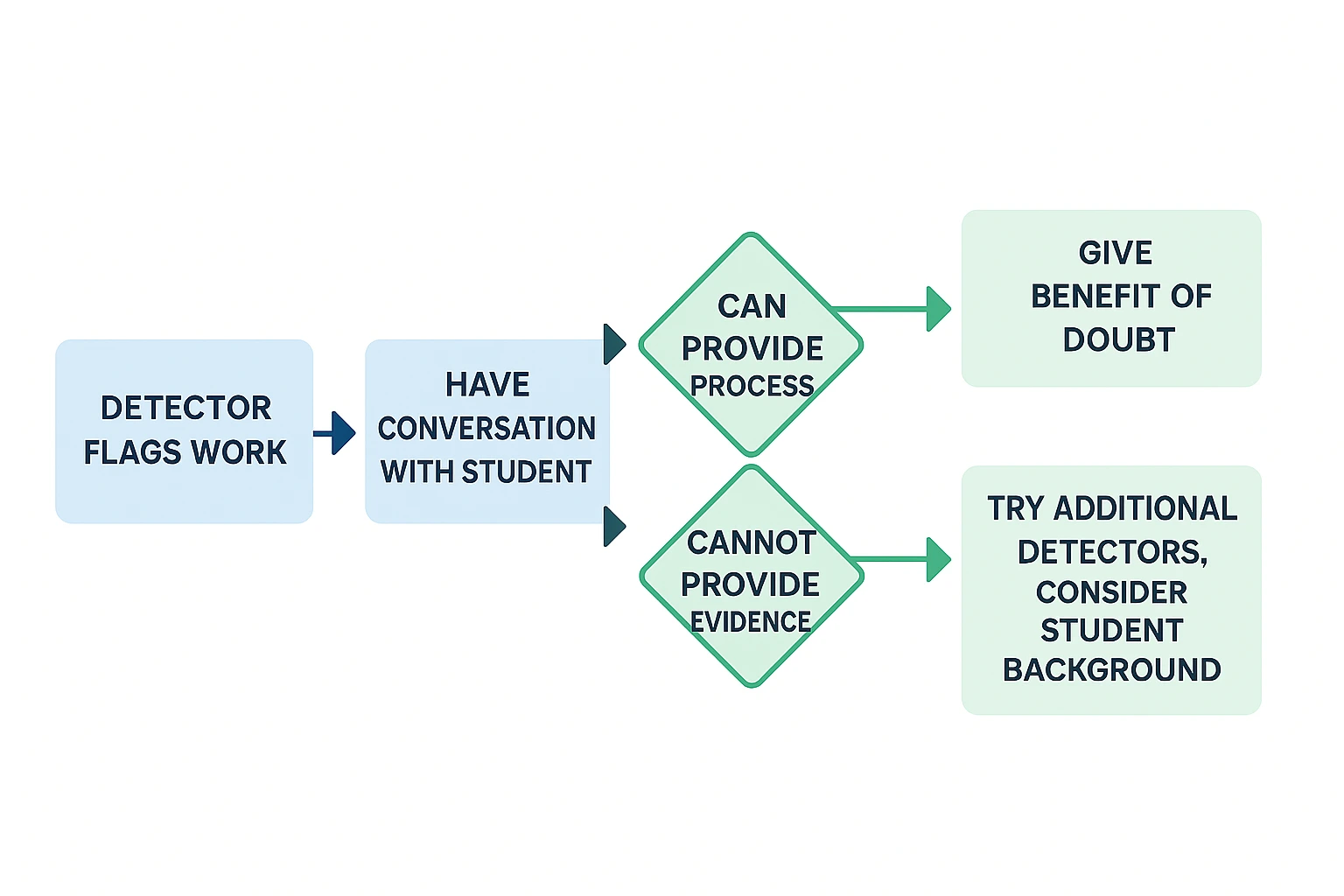

Start With Questions, Not Accusations

If something gets flagged, have a conversation. Don’t go in assuming they cheated. Ask about their process.

Request evidence: Google Docs history, drafts, notes. Honest students can usually show their work. And if they can’t? Maybe they’re telling the truth anyway and just didn’t keep records.

Think About Student Background

Remember that Stanford study. Non-native English speakers get flagged way more. Students using Grammarly might trigger false positives. Kids with ADHD, dyslexia, or autism get flagged more too according to research.

Context matters more than percentages.

Try Multiple Tools If You’re Checking

Different detectors work differently. If one flags something, try another. If they disagree significantly, that’s your sign the result is uncertain.

Give the benefit of the doubt in those cases.
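Here’s that “try another tool” rule as a rough Python sketch. Everything in it is hypothetical: you type in each tool’s “% AI” score by hand (no real detector API is called), the tool names are placeholders, and the cutoffs (`flag_at=80`, `max_spread=30`) are invented for illustration, not recommended by any vendor. Note that even the “agree” case leads to a conversation, not an accusation.

```python
def triage(scores: dict[str, float], flag_at: float = 80.0, max_spread: float = 30.0) -> str:
    """Suggest a next step from several detectors' '% AI' scores (0-100).

    `scores` maps a tool name to the score you read off that tool.
    `flag_at` and `max_spread` are hypothetical cutoffs for illustration only.
    """
    if len(scores) < 2:
        return "check with at least one more tool"
    values = list(scores.values())
    if any(not 0 <= v <= 100 for v in values):
        raise ValueError("scores must be between 0 and 100")
    spread = max(values) - min(values)
    if spread > max_spread:
        # Tools disagree a lot: the result is uncertain.
        return "tools disagree: treat as uncertain and give the benefit of the doubt"
    if min(values) >= flag_at:
        # Every tool scored it high: still only a reason to talk, not proof.
        return "tools agree on a high score: talk with the student and ask for drafts"
    return "no consistent flag: no action needed"


print(triage({"Tool A": 95, "Tool B": 20}))
print(triage({"Tool A": 92, "Tool B": 85, "Tool C": 88}))
```

The design choice here is that disagreement wins: a big spread between tools overrides any single high score.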

Focus on Teaching, Not Policing

More and more research says we should teach kids how to use AI responsibly instead of trying to ban it completely.

Design assignments that need personal insight. Require analysis that AI can’t really do well. Focus on critical thinking that shows understanding.

The Bottom Line on All This

So here’s what we know from actual research and real classroom experiences:

Strongest options based on what’s documented: GPTZero (8M+ users, claims 99%), Winston AI (that 99.98% number), Copyleaks (top-ranked in a 2023 arXiv study), and Pangram Labs (lowest false positive claims). Turnitin’s everywhere but controversial. Scribbr’s free and unlimited if you’re on a zero budget, though they admit it’s only average accuracy.

But every single one has risks. False positives hurt innocent kids. There’s documented bias against non-native English speakers. Multiple major universities straight-up disabled these tools after seeing the problems.

Perfect detection might literally be impossible as AI keeps improving. The gap between AI writing and human writing keeps shrinking.

If you decide to use AI detector tools for teachers, treat them like one piece of evidence, not proof. Combine with knowing your students, having actual conversations, and asking for documentation.

Honestly? The better long-term move according to researchers is probably teaching responsible AI use instead of trying to catch every instance of it. Design assignments that need personal insight. Build critical thinking that AI sucks at replicating.

Technology’s not gonna stop advancing. Our teaching methods need to adapt while keeping academic integrity and supporting real learning.

That’s it. That’s the actual situation based on what research and educators are finding.

Important Reminder: This analysis reflects current research and documented experiences as available from published sources. Detection technology evolves rapidly. Schools should evaluate their specific needs, student populations, and institutional values before implementing any AI detection systems. When in doubt, prioritize student relationships and educational conversations over technological enforcement.

Disclosure: This article contains affiliate links to some platforms reviewed. Commissions may be earned from purchases made through these links at no additional cost to readers. All platforms underwent the same research methodology examining published studies, institutional decisions, documented educator feedback, and verified accuracy claims. No platform received favorable treatment in exchange for affiliate relationships. All assessments reflect analysis of available evidence from university research, educational forums, and published reports rather than promotional material.

Your needs will differ from anyone else’s. This is one perspective, based on the specific criteria used in this analysis. That’s why starting with the free tiers is recommended. See what actually works for YOU based on your priorities.

And seriously, don’t share personal info with AI tools; privacy guidance is clear on this. Seems obvious, but security reports show people do risky things online all the time.


© SpotNewAI.com | Finding AI tools that actually work