Catastrophic AI Risks for Normal People: 5 Hidden Dangers

Catastrophic AI Risks For Normal People (Without The Sci-Fi Drama)

The quiet ways AI tools can mess with your grades, job, and reputation

⚠️ Real Risks, Real Solutions

Most people hear “catastrophic AI risks” and instantly think of films. Killer robots, glowing red eyes, some superintelligence that wakes up and decides humans are a bug to remove.

That makes for good headlines, but it hides the kind of risks that can hurt normal people right now: students, freelancers, small creators, office workers, teachers. You don’t need a runaway superintelligence for something to feel catastrophic. Sometimes one accusation or one careless AI shortcut is enough.

What People Usually Mean By “Catastrophic AI Risks”

When researchers and policymakers talk about catastrophic AI risks, they usually mean large-scale damage that affects whole societies, not just one person. The same themes come up again and again:

- Very powerful AI systems that humans cannot reliably control

- Bad actors using AI to push targeted disinformation at massive scale

- Automation changing job markets faster than people can retrain

- Advanced models deployed without proper safety testing or oversight

These topics matter a lot. The problem is that they feel far away when you are just trying to pass a module, keep a client happy or write an email that does not sound awkward.

From an individual point of view, the “catastrophe” might be much smaller and much more personal. A ruined semester. A lost job opportunity. A damaged reputation that follows you around when you apply for something else. If you want a clear, high-level overview of catastrophic AI risks at a society-wide level, the Center for AI Safety publishes a helpful summary.

The Everyday Catastrophic AI Risks Nobody Talks About

There is another layer of catastrophic AI risks that rarely makes the news. These are not about the end of the world. They are about situations where AI quietly makes your own life harder or more fragile.

1. False accusations from AI detectors

Imagine this situation.

You stay up late and write an essay, article or report from scratch. Maybe you use AI once for basic spell check, but the ideas and sentences are yours. You submit the work. Someone in charge runs it through an AI detector.

The screen shows a label such as ‘High likelihood of AI-generated content’.

If that person trusts the score blindly, things can go wrong very fast:

- Students can be accused of academic misconduct

- Freelancers can lose a contract or get a bad note on their profile

- Employees can lose trust from their manager or team

The scary part is the combination of three things:

- Detectors sometimes give false positives on genuine human writing

- Many schools and companies do not have a clear appeals process

- Power is one-sided, so people are scared to push back

For the person being accused, this can feel catastrophic. It can affect grades, references, visas, promotions and even mental health. The detector goes back to sleep after printing a score. You are the one who sits in the meeting.

2. Pasting sensitive data into AI tools

Another quiet risk appears when people treat AI tools like a private diary that nobody will ever read.

It feels natural in the moment. You copy and paste:

- A thesis chapter

- A client proposal

- A draft contract

- A very personal email

Then you ask the model to polish it, translate it or rewrite it in a better tone.

Once that text is inside a system, you lose direct control over it. Depending on the tool and settings, several things can happen:

- The text might be stored in logs for troubleshooting or model improvement

- Specific staff members could see snippets as part of their job

- A future data breach or policy change could expose content you thought was safe

For researchers, this might mean unpublished ideas leaking early. For freelancers and employees, it can mean confidential prices, legal details or client names appearing where they should not. For ordinary people, it can be very private conversations or health issues stored in places they never intended.

3. Overusing AI humanizers as a disguise

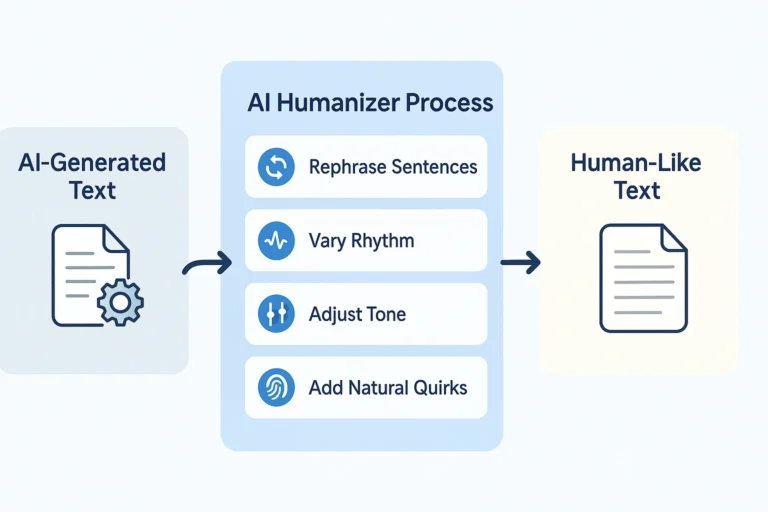

As AI detectors spread, more people are turning to “humanizer” tools and rewriters that promise to make AI text “undetectable”.

Used carefully, rewriting tools can actually help. They can improve clarity, fix grammar and adjust tone, especially for non-native speakers. The risk starts when they are used mainly as a disguise.

Typical warning signs look like this:

- You paste entire essays into a humanizer instead of sections

- You accept the output without really reading it

- You hand in text that you do not fully understand or remember crafting

If a teacher, client or manager then asks you basic follow-up questions, you may struggle to explain how you got to that wording or that argument. At that point the problem is not just “AI use”. It is that you cannot stand behind your own work.

In the worst case, this looks more suspicious than honest, limited use of a writing assistant would have.

4. Slow erosion of your own skills

There is a quieter catastrophic AI risk that takes months or years to show up. It is the slow erosion of basic skills.

At first, asking an AI to “rewrite this in better English” feels harmless. Over time, you might notice that:

- It is harder to start writing from a blank page without a prompt

- Your natural style starts to sound like a generic model instead of you

- You feel less confident in exams, interviews or live presentations where AI cannot help

This is not dramatic enough for headlines, but for one person it matters a lot. You can end up feeling dependent on a tool for tasks you used to handle on your own. That dependence can itself become a catastrophic risk if the tool is removed or restricted at a key moment.

5. Reputation damage that sticks

Online reputation is fragile. One awkward AI-generated LinkedIn post or one tone-deaf automated email can do more damage than you expect, especially in small professional circles.

Even if you delete it, screenshots live on. People remember that you sounded robotic or fake in a moment where authenticity really mattered.

For small creators, job seekers and solo professionals who rely on personal branding, that kind of reputation hit is not a small detail. It can influence who gets hired, who gets recommended and who is quietly avoided.

How To Use AI Without Inviting Disaster

The good news is that you do not need to be a technical expert to avoid most of these risks. A few practical habits already put you ahead of many users.

Use AI as an assistant, not as a ghostwriter

AI works best when it supports your thinking instead of replacing it.

Safe and useful ways to use AI include:

- Brainstorming ideas or outlines when you feel stuck

- Getting alternative ways to phrase a sentence you already wrote

- Checking grammar and clarity after you finish a draft

As a simple rule, if you cannot explain a paragraph in your own words, you relied too much on the tool. That is exactly when trouble starts if someone questions your work.

Keep a simple record of your process

If you are in a school or workplace that uses AI detectors, it is smart to keep light evidence of your process. Nothing complicated. Things like:

- Photos of handwritten notes or early plans

- Older document versions that show how your text evolved

- Short logs of when you used AI and for what type of help

If a detector ever flags your work, this kind of material can turn the situation from “prove you did not cheat” into “let us look at your process”. That is a much healthier conversation.
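For readers comfortable with a little scripting, the "short logs" idea can be as simple as appending one line per AI session to a text file. The sketch below is only one possible way to do it: the function names `log_ai_use` and `read_log`, the field names, and the JSON Lines file format are all assumptions made for illustration, not a required or standard format. A notebook or a spreadsheet works just as well.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_ai_use(log_path, tool, purpose, document):
    """Append one record of AI use to a JSON Lines file and return it.

    All field names here are illustrative; use whatever makes sense to you.
    """
    entry = {
        # UTC timestamp, so the record is unambiguous across time zones
        "when": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "tool": tool,          # which assistant you used
        "purpose": purpose,    # what kind of help you asked for
        "document": document,  # which piece of work it relates to
    }
    # Append mode keeps earlier entries intact
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return entry


def read_log(log_path):
    """Return all logged entries as a list of dictionaries, oldest first."""
    with Path(log_path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

A call such as `log_ai_use("ai_log.jsonl", "chat assistant", "grammar check", "essay draft 3")` adds one line to the file. Keeping the log next to your drafts and version history makes it easy to show what help you asked for and when.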

Think carefully before pasting confidential text

Before you drop something into any AI box, ask yourself one clear question.

“Would I be comfortable if this exact text was accidentally shared with my teacher, boss, client or a stranger?”

If the answer is no, consider one of these options:

- Remove names, addresses, ID numbers and private details

- Change sensitive numbers or specific examples

- Summarise the idea instead of pasting full paragraphs

You can also look for tools or modes with stronger privacy controls for legal, medical or financial information. Even then, the basic “what if this leaked” test is worth keeping.
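If you are comfortable running a small script, the "remove names, addresses, ID numbers" step can be partly automated before anything reaches a prompt box. The following is a minimal sketch under loud assumptions: the `redact` function, the placeholder labels, and the three patterns are invented for illustration and will miss many real-world formats (street addresses, for example, are not covered). Treat it as a first pass and still read the text yourself before pasting.

```python
import re

# Illustrative patterns only. Real personal data takes many more forms,
# so a clean result here does not guarantee the text is safe to share.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
    "ID": re.compile(r"\b\d{6,}\b"),  # any standalone run of 6+ digits
}


def redact(text, names=()):
    """Replace emails, phone-like numbers, long digit runs and the given
    names with bracketed placeholders."""
    # Patterns run first so that names inside email addresses are
    # removed together with the address.
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    # Names must be supplied by you; the script cannot guess them.
    for name in names:
        text = re.sub(re.escape(name), "[NAME]", text, flags=re.IGNORECASE)
    return text


sample = (
    "Contact Anna Kovacs at anna.kovacs@example.com "
    "or +44 20 7946 0958 about invoice 20231187."
)
print(redact(sample, names=["Anna Kovacs"]))
# prints: Contact [NAME] at [EMAIL] or [PHONE] about invoice [ID].
```

The names list is passed in by hand on purpose: only you know which people, clients or companies in a document are sensitive, and no pattern can reliably work that out for you.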

Use humanizers for polish, not for hiding

Rewriting and humanizing tools are safest when you treat them like a style assistant, not like a way to “beat” detectors.

Good use looks like:

- You write the first version yourself

- You send short sections, not entire documents

- You read the output slowly and edit it until it sounds like you

If you find yourself pasting everything, accepting everything and hoping nobody checks, that is a red flag. You are giving the tool more control over your reputation than it deserves.

🎯 Catastrophic AI Risks From The Ground Level

From the outside, catastrophic AI risks sound like huge, abstract scenarios. From the ground level, they are often much smaller, but much more personal.

- A false accusation that puts your degree or job at risk

- A private document that travels further than it should

- A slow loss of confidence in your own skills

- A reputation that gets tied to clumsy AI use instead of your real work

You cannot fully control which models big companies build next, but you do have real control over how you use those models in your daily life.

You decide when AI is welcome in your process, and when you want to write, think and speak without it. You decide how transparent you are with teachers, clients or managers. You decide what goes into the prompt box and what stays offline.

If you keep AI in the role of helper instead of hero, you lower your exposure to the quiet, personal disasters that never trend on social media but matter a lot when they land in your inbox. And you keep the most important thing where it belongs: your name and your reputation attached to work you actually understand and stand behind.

✅ Quick safety checklist

- Use AI as an assistant for brainstorming, phrasing, and grammar, not as a ghostwriter

- Keep process evidence: notes, drafts, version history

- Ask “would I be comfortable if this leaked?” before pasting sensitive data

- Use humanizers for polish on short sections, not to hide entire documents

- If you can’t explain a paragraph in your own words, you relied too much on AI

- Practice writing regularly without AI to maintain your skills